What is grid computing?

Grid computing refers to a network of decentralised computers that together form a virtual supercomputer. Because computing power is distributed flexibly, complex tasks can be processed with multiple resources simultaneously, and infrastructure utilisation can be optimised.

Grid computing: definition

Grid computing is a sub-area of distributed computing, a generic term for digital infrastructures made up of autonomous computers linked in a network. Such networks are usually hardware-independent: computers with different performance levels and equipment can be integrated into them. Distributed applications and processes run across the networked computer units, which communicate with each other both locally and across regions to solve problems together.

The line between distributed computing and grid computing is fluid. Distributed computing can refer to any decentralised data processing in computer networks. Grid computing, on the other hand, refers to a virtual supercomputer created by connecting loosely coupled computers, which is used to handle computationally intensive processes or tasks. The linked servers and computers contribute their resources and computing power so that the grid can scale up to the required performance.
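The scatter-and-gather pattern behind a virtual supercomputer can be sketched in a few lines of Python. This is a minimal single-machine illustration under stated assumptions: a thread pool stands in for the loosely coupled computers, and the function names (`split_range`, `count_primes`, `run_job`) are hypothetical. Real grid middleware would ship each work unit to a remote machine instead, and because CPython threads do not run CPU-bound Python code in parallel, the sketch shows the structure of the job, not the speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def split_range(start, stop, parts):
    """Split [start, stop) into `parts` contiguous work units of near-equal size."""
    size, extra = divmod(stop - start, parts)
    units, lo = [], start
    for i in range(parts):
        hi = lo + size + (1 if i < extra else 0)  # spread the remainder over the first units
        units.append((lo, hi))
        lo = hi
    return units


def count_primes(unit):
    """A compute-intensive work unit: count primes in [lo, hi) by trial division."""
    lo, hi = unit
    return sum(
        1
        for n in range(max(lo, 2), hi)
        if all(n % d for d in range(2, int(n ** 0.5) + 1))
    )


def run_job(start, stop, nodes):
    """Scatter work units to `nodes` workers, then gather and combine the partial results."""
    units = split_range(start, stop, nodes)
    # Assumption: threads stand in for grid nodes; real middleware dispatches to remote computers.
    with ThreadPoolExecutor(max_workers=nodes) as pool:
        partials = list(pool.map(count_primes, units))
    return sum(partials)


print(run_job(0, 100, nodes=4))  # 25
```

The key design point is that each work unit is independent, so any node can compute it and the partial results only need to be combined at the end.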

How does grid computing work?

In grid computing, the strengths of computer clusters are not centralised but are used across regions in the form of grids. While computer clusters usually consist of local, geographically limited networks, grid computing draws on computing capacity across a network that spans regions. Not only computers are networked, but also databases, hardware, software, and computing capacities. Within the grid, providers link globally and locally distributed computer resources via interfaces (nodes) and middleware. They then assign these resources to virtual organisations, which in turn determine which resources take on which tasks and how computing power is best distributed for an application.
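How middleware might match tasks to resources within virtual organisations can be illustrated with a toy scheduler. This is a hypothetical greedy policy, not the algorithm of any particular grid middleware: the largest tasks are placed first, each on the eligible node (one that serves the task's virtual organisation and has enough free cores) with the most free capacity, and tasks that fit nowhere are left unplaced, as if queued. The class, task, and node names are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A computer contributed to the grid (hypothetical model)."""
    name: str
    free_cores: int
    serves: set = field(default_factory=set)  # virtual organisations allowed to use this node


@dataclass
class Task:
    name: str
    cores: int
    vo: str  # virtual organisation that submitted the task


def schedule(tasks, nodes):
    """Greedy matchmaking: largest tasks first, each to the eligible node with most free cores."""
    placement = {}
    for task in sorted(tasks, key=lambda t: t.cores, reverse=True):
        eligible = [n for n in nodes if task.vo in n.serves and n.free_cores >= task.cores]
        if not eligible:
            placement[task.name] = None  # no capacity right now: the task would wait in a queue
            continue
        best = max(eligible, key=lambda n: n.free_cores)
        best.free_cores -= task.cores  # reserve the cores on the chosen node
        placement[task.name] = best.name
    return placement


nodes = [
    Node("uni-cluster", 16, {"physics"}),
    Node("lab-pc", 4, {"physics", "biology"}),
    Node("office-server", 8, {"biology"}),
]
tasks = [Task("sim", 12, "physics"), Task("fit", 4, "physics"),
         Task("align", 6, "biology"), Task("plot", 8, "biology")]
print(schedule(tasks, nodes))
# {'sim': 'uni-cluster', 'plot': 'office-server', 'align': None, 'fit': 'uni-cluster'}
```

Here the "align" task stays unplaced because no node serving the biology organisation has six free cores left, which is the situation in which a real grid would queue the job until capacity frees up.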

Grid computing is used for commercial purposes as well as for scientific and economic data analysis and processing. When complex processes exceed the computing power of a single computer or a local computer cluster, grid computing can help to integrate, evaluate, or visualise large amounts of data. Special hardware is not a prerequisite. Instead, middleware (software that exchanges data between applications) running on the coupled computers makes their free computing capacity available within the virtual organisation.

Grid computing areas of application

Grid computing is not limited to specific areas of application, as interconnected computer clusters can serve a wide variety of purposes. Well-known uses for virtual supercomputers include scientific and economic big data analyses that process enormous amounts of data, as well as computationally intensive simulations. This applies to research in the natural sciences and medicine, but also to meteorology, industry, and particle physics. One example is the large-scale experiments at CERN's Large Hadron Collider, whose data is distributed for analysis via the Worldwide LHC Computing Grid.

An overview of grid computing classifications

To define grid computing and distinguish it from related technologies such as cluster computing or peer-to-peer computing, three main criteria can help:

- Decentralised, local, and global coordination of resources such as computer clusters, data analytics, mass storage, and databases.

- Standardised, open interfaces (nodes) and middleware (protocols or protocol bundles) that connect computing units to the main grid and distribute tasks.

- Provision of non-trivial quality of service (QoS) to distribute data streams optimally and to ensure consistent scalability and reliable data transfer under high computational demands.
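One ingredient of the reliable data transfer named in the QoS criterion is end-to-end verification with retransmission. The sketch below is a simplified illustration under assumptions: data is split into fixed-size chunks, each chunk's SHA-256 digest is compared on arrival, and a corrupted chunk is resent up to a fixed number of times. The `channel` function simulates an unreliable network link; the chunk size, retry limit, and all names are hypothetical.

```python
import hashlib

CHUNK_SIZE = 4  # bytes per chunk; kept tiny so the example is easy to trace (assumption)


def send_verified(data, channel, max_retries=3):
    """Send `data` chunk by chunk, resending any chunk whose SHA-256 digest does not match."""
    received = bytearray()
    for offset in range(0, len(data), CHUNK_SIZE):
        chunk = data[offset:offset + CHUNK_SIZE]
        expected = hashlib.sha256(chunk).digest()  # digest the receiver checks against
        for _ in range(1 + max_retries):  # first attempt plus retries
            delivered = channel(chunk)
            if hashlib.sha256(delivered).digest() == expected:
                received += delivered
                break
        else:  # loop ended without `break`: every attempt was corrupted
            raise OSError(f"chunk at offset {offset} still corrupt after {max_retries} retries")
    return bytes(received)


def make_flaky_channel(fail_every):
    """Simulated link that corrupts every `fail_every`-th transmission."""
    calls = 0

    def channel(chunk):
        nonlocal calls
        calls += 1
        if calls % fail_every == 0:
            return chunk + b"?"  # simulated corruption: an extra byte
        return chunk

    return channel


message = b"grid computing needs reliable transfer"
print(send_verified(message, make_flaky_channel(3)) == message)  # True
```

Retransmitting only the damaged chunk, rather than the whole payload, keeps the cost of a transmission error small when very large data sets move between grid nodes.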

Beyond this, grid computing can be divided into several types:

- Computing grids: The most common form of grid computing, in which grid users access the coupled computing power of a virtual supercomputer through grid providers to distribute or scale computationally intensive processes.

- Data grids: Data grids provide the computing capacity of interconnected computers to evaluate, display, transmit, share, or analyse large amounts of data via grid nodes.

- Knowledge grids: This structure uses the supercomputing capabilities of the grid to scan, connect, collect, evaluate, or structure large data sets and knowledge bases.

- Resource grids: These systems define coupled hierarchies of grid providers, grid users, and resource providers in the grid. A role-based model determines which resource providers can offer storage and computing capacity, data sets, software and hardware, applications, sensors, measuring devices, and other instruments via interfaces.

- Service grids: In the service grid, grid service providers make resource providers’ bundled components and capacities available to grid users as a complete service. This demonstrates that grid computing combines service orientation and computing services.
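The role model described for resource grids can be pictured as a check performed before a resource is published to the grid. In this hypothetical sketch, each participant has a role and a set of resource types it is approved to offer; only resource providers may register offers, and only for approved types. The class, role strings, and endpoint names are invented for illustration and do not correspond to a specific grid middleware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Participant:
    name: str
    role: str  # hypothetical roles: "grid_provider", "resource_provider", "grid_user"
    may_provide: frozenset = frozenset()  # resource types this participant is approved to offer


def register_offer(catalogue, participant, resource_type, endpoint):
    """Publish a resource in the grid catalogue if the role model allows it."""
    if participant.role != "resource_provider":
        raise PermissionError(f"{participant.name} is not a resource provider")
    if resource_type not in participant.may_provide:
        raise PermissionError(f"{participant.name} may not provide {resource_type!r}")
    catalogue.setdefault(resource_type, []).append((participant.name, endpoint))


catalogue = {}
observatory = Participant("observatory", "resource_provider", frozenset({"sensor", "dataset"}))
register_offer(catalogue, observatory, "sensor", "node-17")
print(catalogue)  # {'sensor': [('observatory', 'node-17')]}
```

A grid user could then look up `catalogue["sensor"]` to find approved sensor endpoints, while an attempt by the observatory to offer, say, computing capacity would be rejected.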

Grid computing vs. cloud computing: what’s the difference?

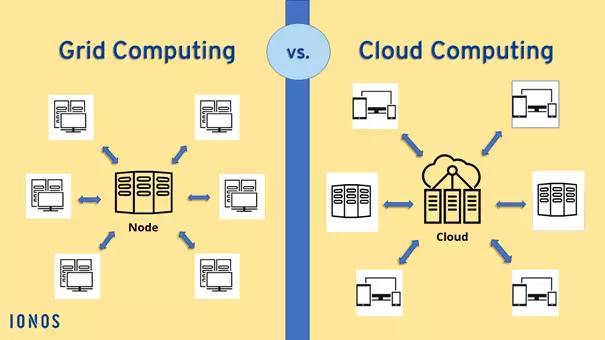

Grid computing shouldn’t be confused with cloud computing. In grid computing, several resources are linked together via decentralised, coupled computers to form a virtual supercomputer. Here, the grid providers own the infrastructure of networked computers and applications. In cloud computing, on the other hand, cloud providers offer computing power, storage capacity, and services globally via cloud hosting, although the computing is managed centrally in the cloud.

Advantages of cloud computing include outsourced, scalable IT infrastructure, cloud storage capacity, and reduced IT overhead. Companies and private users can use cloud services cost-effectively and centrally for a wide range of tasks without having to provide their own resources. Grid computing, on the other hand, makes it possible to process, execute, and access enormous volumes of data and complex processes cost-effectively via coupled grid capacity, without the need for dedicated physical data centres.

Grid computing: advantages and disadvantages

Advantages

- Coordination and management of cross-device processes and tasks.

- Cost-effective scaling of business processes through coupled computing power and storage capacities.

- Simultaneous/parallel processing, analysis, and presentation of large amounts of data through global computer networks.

- Complex tasks can be solved faster and more effectively.

- Reliable, efficient use of IT infrastructure through virtual organisations and flexible task distribution.

- Low susceptibility to failure, as capacity is distributed flexibly and modularly across the grid.

- No need for large investments in server infrastructure.
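The low susceptibility to failure listed above typically comes from resubmitting work elsewhere when a node drops out. The following is a minimal sketch of that idea, assuming a hypothetical `run_on(node, task)` callable that raises `ConnectionError` when a node is unreachable; the node names and the simulated outage are invented for illustration.

```python
def run_with_failover(task, nodes, run_on):
    """Try `task` on each node in turn; return (node, result) from the first that succeeds."""
    errors = {}
    for node in nodes:
        try:
            return node, run_on(node, task)
        except ConnectionError as exc:
            errors[node] = str(exc)  # record the failure, then resubmit to the next node
    raise RuntimeError(f"task {task!r} failed on every node: {errors}")


DOWN = {"node-a"}  # simulated outage (hypothetical)


def fake_run_on(node, task):
    """Stand-in for remote execution: doubles the input unless the node is down."""
    if node in DOWN:
        raise ConnectionError(f"{node} unreachable")
    return task * 2


print(run_with_failover(21, ["node-a", "node-b"], fake_run_on))  # ('node-b', 42)
```

Only when every candidate node fails does the job as a whole fail, which is why spreading capacity across many independent machines makes the grid robust against individual outages.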

Disadvantages

- Administration is complex, and system components may be incompatible.

- Computing power does not increase linearly with the number of coupled computers.
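One standard way to quantify why computing power does not grow linearly, not named in the article itself, is Amdahl's law: if a fraction p of a job can be parallelised, N computers yield a speedup of at most S(N) = 1 / ((1 - p) + p / N). The sketch below assumes p = 0.95 purely for illustration and, like the formula, ignores network latency and coordination overhead, which make real grids scale even less well.

```python
def amdahl_speedup(parallel_fraction, n_computers):
    """Upper bound on speedup from Amdahl's law; ignores network and coordination overhead."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must lie between 0 and 1")
    if n_computers < 1:
        raise ValueError("n_computers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction  # part of the job that cannot be split up
    return 1.0 / (serial_fraction + parallel_fraction / n_computers)


# A job that is 95% parallelisable can never run more than 1 / 0.05 = 20x faster.
for n in (1, 10, 100, 1000):
    print(n, round(amdahl_speedup(0.95, n), 1))
# 1 1.0
# 10 6.9
# 100 16.8
# 1000 19.6
```

Going from 100 to 1,000 coupled computers raises the bound only from about 16.8 to about 19.6, because the serial 5% of the job dominates once the parallel part is spread thinly.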