What is RankBrain? The Evolution of the Google Algorithm

What is RankBrain, and how is it changing Google Search? Since 2015, Google has relied on the self-learning AI system RankBrain to interpret search queries. It helps recognise user intent even for new or complex search terms, so that Google can deliver relevant results. The algorithm is based on machine learning and is considered part of Google’s long-term AI strategy, which also includes DeepMind.

What is RankBrain? The definition

RankBrain is a self-learning AI system that has been used since early 2015 as part of the overarching Google search algorithm ‘Hummingbird’. The primary task of RankBrain is to interpret keywords and search phrases with the aim of determining user intent.

According to its own data, Google receives around 8.5 billion queries daily through its web search. About 15 percent of user inputs consist of keywords and word combinations that have never been entered in that form before, including colloquial terms, neologisms, or complex long-tail phrases.

When Google refers to RankBrain as a ‘self-learning AI system’, it means artificial intelligence in the sense of weak (narrow) AI: a technology that finds automated solutions for specific problems that previously had to be handled by humans. Like most systems of this kind, RankBrain relies on machine learning techniques.

How does RankBrain work?

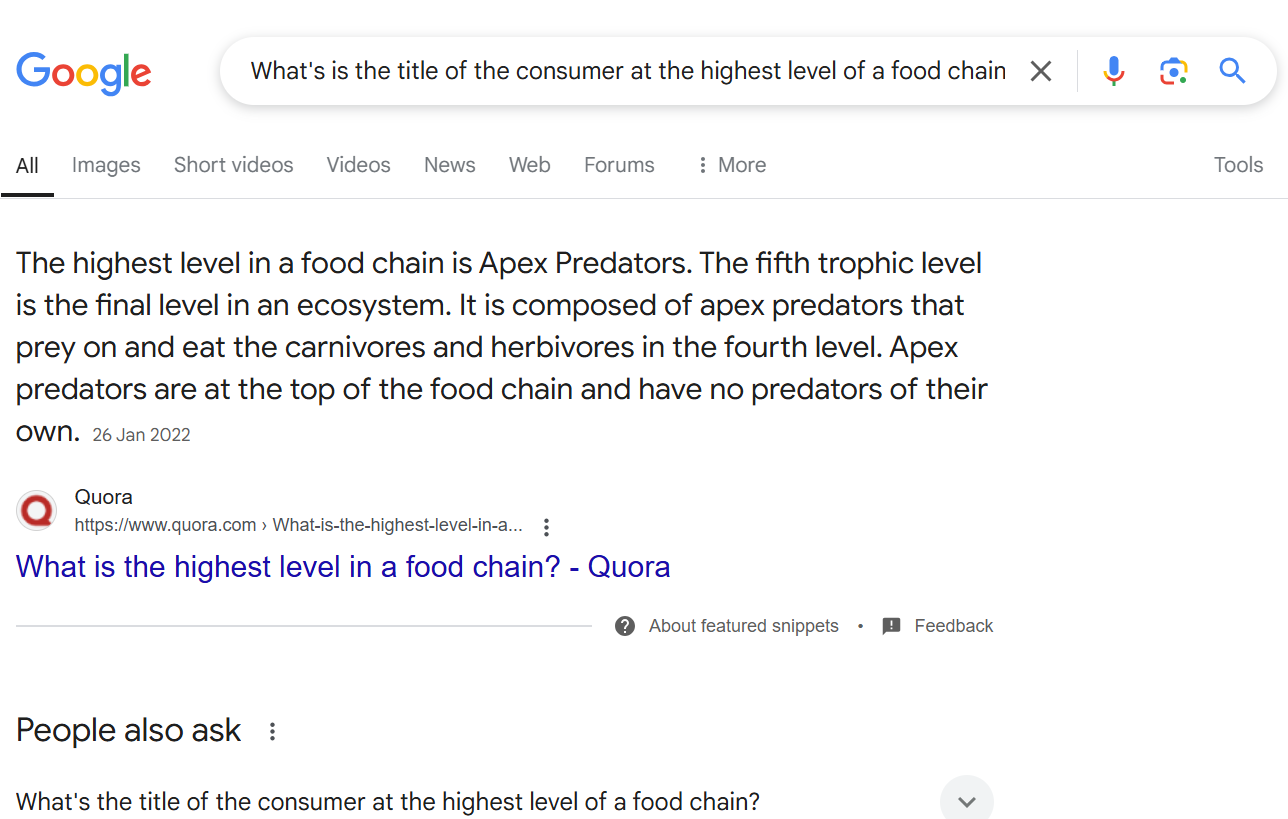

RankBrain helps Google interpret user inputs and find exactly the webpages from the Google search index—a database around 100 million gigabytes in size—that best match the user’s search intent. The AI system goes well beyond merely matching search terms. Instead of analysing each word of a query independently, RankBrain captures the semantics of the entire user input and thus determines the intent of the searcher. This way, even with a long-tail phrase, users quickly get to the answer they are hoping for.

As a machine-learning system, RankBrain draws on its experience with previous search queries. It identifies connections between them and uses these to predict what the user is searching for and how best to answer the query. The aim is to resolve ambiguities and decipher the meaning of previously unknown terms (e.g., neologisms). However, Google does not disclose exactly how RankBrain tackles this challenge. SEO experts suspect that RankBrain uses word vectors to translate queries into a form that allows computers to interpret contextual meaning.
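The idea that SEO experts suspect can be illustrated with a minimal sketch. It is not Google's method: the three-dimensional vectors in `TOY_VECTORS` are invented for this example, and averaging word vectors into a query vector is only one simple, assumed way to represent a whole query. The sketch shows how a query made of unfamiliar words could still be matched to a known query with a similar meaning.

```python
import math

# Hypothetical word vectors, invented purely for illustration.
TOY_VECTORS = {
    "cheap":      [0.9, 0.1, 0.0],
    "affordable": [0.8, 0.3, 0.1],
    "flights":    [0.0, 0.9, 0.1],
    "airfare":    [0.1, 0.8, 0.1],
    "pasta":      [0.1, 0.0, 0.9],
    "recipes":    [0.0, 0.0, 1.0],
}

def query_vector(query):
    """Average the vectors of all known words in the query (assumed approach)."""
    known = [TOY_VECTORS[w] for w in query.lower().split() if w in TOY_VECTORS]
    if not known:
        raise ValueError(f"no known words in query: {query!r}")
    return [sum(dim) / len(known) for dim in zip(*known)]

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

known_queries = ["cheap flights", "pasta recipes"]
new_query = "affordable airfare"  # shares no keyword with either known query

# Pick the known query whose vector points in the most similar direction.
nearest = max(
    known_queries,
    key=lambda q: cosine_similarity(query_vector(new_query), query_vector(q)),
)
print(nearest)
```

Although "affordable airfare" has no word in common with "cheap flights", the two query vectors point in almost the same direction, so the sketch selects "cheap flights" as the closest known query.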

What provides the basis for RankBrain’s semantic analyses?

According to statements from several Google engineers, RankBrain is partly based on concepts like Word2Vec and uses similar vector space techniques to grasp the meaning of words. Google released Word2Vec as open-source machine-learning software in 2013. It converts the semantic relationships between words into a mathematical representation so that they can be measured and compared. The foundation of this analysis is linguistic text corpora.

Creation of the Vector Space

To ‘learn’ contextual relationships between words, Word2Vec starts by creating an “n”-dimensional vector space in which each word of the underlying text corpus (referred to as “training data”) is represented as a vector. The “n” indicates how many vector dimensions a word should be mapped to. The more dimensions chosen for the word vectors, the more relations to other words the program captures.
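As a rough sketch of this first step, the following Python snippet builds a vocabulary from a tiny corpus and assigns each word an n-dimensional vector. The function name `build_vector_space`, the example corpus, and the choice of small random starting values are assumptions made for illustration and are not taken from the Word2Vec source code.

```python
import random

def build_vector_space(corpus, n, seed=0):
    """Map every distinct word in the corpus to an n-dimensional vector.

    The vectors start as small random values (an assumed initialisation);
    they only become meaningful once a learning algorithm adjusts them.
    """
    rng = random.Random(seed)
    vocabulary = sorted({word for sentence in corpus for word in sentence.lower().split()})
    return {word: [rng.uniform(-0.5, 0.5) for _ in range(n)] for word in vocabulary}

# Hypothetical training data: two short sentences.
corpus = ["the cat sat on the mat", "the dog sat on the rug"]
space = build_vector_space(corpus, n=5)

print(len(space))         # number of distinct words in the corpus
print(len(space["cat"]))  # number of dimensions per word vector
```

Real training corpora contain millions of words, and n is typically in the range of a few hundred dimensions rather than five.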

Adjustment of the Vector Space

In the second step, the vector space is fed into an artificial neural network (ANN). A learning algorithm adjusts it so that words used in the same context end up with similar word vectors. The similarity between two word vectors is measured using cosine similarity, which yields a value between -1 and +1.
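The cosine measure described above can be written in a few lines of Python. This is a generic sketch of the standard formula (dot product divided by the product of the vector lengths), not code from Word2Vec or Google; the example vectors are arbitrary.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between vectors a and b (between -1 and +1)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])    # close to +1
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])        # 0
opposite = cosine_similarity([1.0, 2.0], [-1.0, -2.0])        # close to -1
print(same_direction, orthogonal, opposite)
```

Because only the angle between the vectors matters, two words can be judged similar even if one vector is much longer than the other.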

The role of Word2Vec

When Word2Vec receives a text corpus as input, it generates word vectors that reflect the semantic relationships between the words. These vectors make it possible to evaluate how closely related the words are in meaning. Faced with new input, Word2Vec can update its vector space using its learning algorithm, forming new semantic associations or revising previous ones as needed. In other words, the neural network is ‘trained’.
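The principle that words used in the same context receive similar vectors can be demonstrated without a neural network. The sketch below deliberately swaps in a simpler technique: it counts which words occur near each other (co-occurrence counts) instead of training vectors as Word2Vec does. The corpus, the window size, and all function names are assumptions chosen for illustration.

```python
import math
from collections import Counter, defaultdict

def cooccurrence_vectors(corpus, window=2):
    """Represent each word by how often every other word appears within `window` positions."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for i, word in enumerate(words):
            lo, hi = max(0, i - window), min(len(words), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[word][words[j]] += 1
    vocabulary = sorted(counts)
    # One dimension per vocabulary word; a Counter returns 0 for unseen neighbours.
    return {w: [counts[w][c] for c in vocabulary] for w in vocabulary}

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical mini corpus: "cat" and "kitten" share a context, "car" does not.
corpus = [
    "the cat drinks milk",
    "the kitten drinks milk",
    "the car needs fuel",
]
vectors = cooccurrence_vectors(corpus)

sim_cat_kitten = cosine_similarity(vectors["cat"], vectors["kitten"])
sim_cat_car = cosine_similarity(vectors["cat"], vectors["car"])
print(sim_cat_kitten > sim_cat_car)
```

In this toy corpus, "cat" and "kitten" have identical neighbours and therefore nearly identical vectors, whereas "cat" and "car" only share the neighbour "the". Word2Vec reaches a comparable outcome with dense, trained vectors rather than raw counts.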

Google has not officially confirmed a connection between Word2Vec and the search algorithm component RankBrain, but it can be assumed that the AI system relies on similar mathematical operations.

Using artificial neural networks, researchers attempt to simulate the organisational and processing principles of the human brain. The goal is to develop systems capable of solving problems with vagueness or ambiguity, thus taking on tasks previously reserved for humans. At Google, neural networks are employed, for example, in automatic image recognition.

RankBrain as a ranking factor in search engine optimisation (SEO)

Even more surprising than the announcement that Google’s artificial intelligence research impacts web search is how deeply this technology is embedded: Since 2016, Google has used RankBrain to interpret every search query. According to Greg Corrado, Senior Research Scientist at Google, the self-learning AI system has become the third most important ranking factor in the search algorithm.

Andrey Lipattsev, Search Quality Senior Strategist at Google, likewise described RankBrain as the third most important ranking factor at the time. However, the Google algorithm has since evolved and is now complemented by BERT and other AI technologies.

For website operators and SEO experts, the perspective on keyword strategies has changed notably. As a semantic search engine, Google can draw on background knowledge in the form of concepts and relationships to determine the meaning of texts and search queries. Whether a website ranks well for a specific term depends less on whether it contains that term and more on whether its (text) content is relevant to the concept that RankBrain associates with the search term. The focus is not on the keyword itself, but on the content relevance of a website.

Thanks to RankBrain and the continuous development of BERT and other technologies, content relevance and user intent are increasingly the focus of search engine optimisation.

These AI modules complement RankBrain

RankBrain was introduced in 2015 and was considered a breakthrough in Google’s interpretation of search queries at that time. The technology has since evolved. Today, RankBrain remains an important part of the Google algorithm, especially in interpreting search terms and determining user intent. However, it is no longer the sole factor determining the interpretation of search queries.

BERT as support for RankBrain

In 2019, Google introduced BERT (Bidirectional Encoder Representations from Transformers), another AI model that complements RankBrain in processing natural language inputs. While RankBrain primarily aids the semantic analysis of long-tail search terms and unfamiliar word combinations, BERT is used more to contextualise complete sentences and to consider the meaning of words in their specific context.

MUM and other AI technologies for interpreting search queries

In addition to RankBrain, Google now uses other AI models like BERT and MUM (Multitask Unified Model) to better understand search queries. Complex or ambiguous questions in particular benefit from these advancements. MUM is capable of combining information from various sources and formats (e.g., text and images) and putting it into a meaningful context.

Even though Google has never fully disclosed exactly how RankBrain, BERT, and MUM work together, it’s clear that semantic search technology has significantly evolved.

Important AI modules in the Google Algorithm:

- RankBrain: interprets search queries, especially new or unusual expressions

- BERT: analyses the context of words in search queries (e.g., sentence structure)

- MUM: understands complex search intentions and combines content from different formats

For search engine optimisation, this means that classic SEO based on keywords and technical optimisation alone is no longer sufficient. What truly matters now is high-quality, user-centred content that considers search intent, context, and semantic relevance.
